In 1997, an MIT graduate student named Latanya Sweeney stunned the privacy world by matching publicly available voter rolls with hospital records stripped of names and addresses to identify the supposedly anonymous medical history of the then-governor of Massachusetts. Three years later, she expanded on that finding by demonstrating that 87 per cent of the U.S. population could be uniquely identified using just three data points: ZIP code, date of birth and gender.

My Globe and Mail op-ed notes that Ms. Sweeney’s work shaped privacy frameworks worldwide, which responded with de-identification standards designed to manage the risk by removing obvious identifiers, applying statistical tests and treating the resulting data as safe to use. Indeed, a core tenet of modern privacy regulation rests on the premise that de-identified data can be used, disclosed and commercialized without compromising individual privacy.
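The linkage Ms. Sweeney demonstrated is simple to sketch. The Python below is a minimal illustration, not her actual method or data: every record, name and diagnosis is invented, and the field names (`zip`, `dob`, `sex`) and the helper functions `link` and `unique_share` are hypothetical. It shows how records stripped of names can still be tied back to a public list whenever the combination of ZIP code, date of birth and gender is unique.

```python
from collections import Counter

# Hypothetical toy data: every value below is invented for illustration.
QUASI_IDENTIFIERS = ("zip", "dob", "sex")

voter_roll = [
    {"name": "Alice Example", "zip": "02138", "dob": "1950-01-15", "sex": "F"},
    {"name": "Bob Sample", "zip": "02138", "dob": "1962-07-04", "sex": "M"},
    {"name": "Carol Placeholder", "zip": "02139", "dob": "1962-07-04", "sex": "F"},
    {"name": "Dan Demo", "zip": "02139", "dob": "1971-03-09", "sex": "M"},
    {"name": "Dave Demo", "zip": "02139", "dob": "1971-03-09", "sex": "M"},
]

# "De-identified" records: names removed, quasi-identifiers left in place.
hospital_records = [
    {"zip": "02138", "dob": "1950-01-15", "sex": "F", "diagnosis": "condition A"},
    {"zip": "02139", "dob": "1971-03-09", "sex": "M", "diagnosis": "condition B"},
    {"zip": "02140", "dob": "1980-11-30", "sex": "F", "diagnosis": "condition C"},
]


def key(record):
    """Return the quasi-identifier combination for one record."""
    return tuple(record[field] for field in QUASI_IDENTIFIERS)


def link(public, deidentified):
    """Attach a name to each de-identified record whose key is unique in the public list."""
    counts = Counter(key(r) for r in public)
    names = {key(r): r["name"] for r in public if counts[key(r)] == 1}
    return [(names[key(r)], r["diagnosis"]) for r in deidentified if key(r) in names]


def unique_share(public):
    """Fraction of the public list that is unique on the quasi-identifiers."""
    counts = Counter(key(r) for r in public)
    return sum(1 for r in public if counts[key(r)] == 1) / len(public)


matches = link(voter_roll, hospital_records)
share = unique_share(voter_roll)
print(matches)
print(share)
```

In this toy example only one hospital record is re-identified. The second shares its three attributes with two people on the voter list and the third has no counterpart there, which is the kind of ambiguity that de-identification standards try to preserve.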

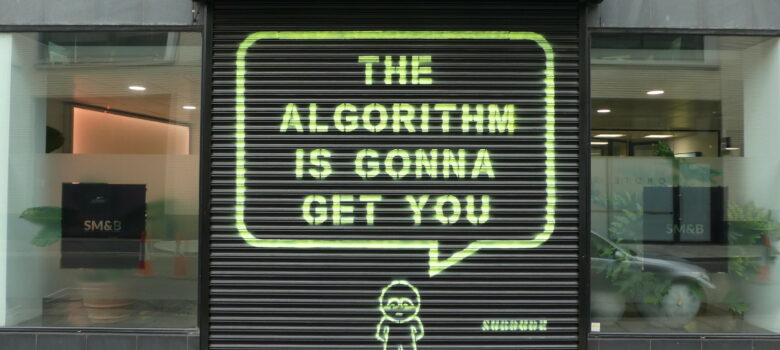

Artificial intelligence has broken that premise. AI systems equipped with real-time search capabilities and inferential power can now accomplish in minutes what once took skilled researchers days. A February study from ETH Zurich demonstrated that AI agents could match anonymized online accounts to real-world identities with up to 90-per-cent precision, replicating in minutes what would take a skilled human investigator hours. What Ms. Sweeney identified as a vulnerability is increasingly an operational reality for anyone with an internet connection and an AI chatbot.

This matters urgently for Canada. AI Minister Evan Solomon has promised an updated national AI strategy that features modernized privacy rules. It may be tempting to turn to strengthened protections that have been debated for years. However, AI is changing the privacy discussion in ways that make the path forward more complicated than simply restarting the old reform effort. Getting this right requires grappling with both sides of the AI equation: what goes in and what comes out.

On the input side, there has been a notable global shift toward more permissive treatment of personal information used for AI training. Japan just amended its privacy law with the explicit goal of becoming the easiest country in the world in which to develop AI, replacing consent requirements with opt-out mechanisms. Britain recently loosened restrictions on automated decision-making and created broader research exemptions. The European Union has proposed regulations that would ease data-processing rules for AI-model training and narrow the scope of data subject rights. The direction is unmistakable: the world’s leading privacy jurisdictions are softening their rules to accommodate AI development. Canada will face pressure to follow.

The output side of AI presents a privacy challenge that has received far less attention but may prove more consequential. The concern is not what personal data goes into AI systems but rather what personal information comes out. Modern AI systems can access publicly available data from multiple sources, combine fragments that are individually harmless, and draw inferences that re-identify individuals from information that was never intended to be personally identifiable.

Consider what this means for the de-identification framework at the heart of Canadian privacy law reform. Earlier proposals would have prohibited organizations from re-identifying de-identified data. But that prohibition targeted deliberate acts of re-identification. It did not contemplate a world in which an AI system reassembles an identity from fragments scattered across the open internet as part of a routine prompt. The legal test for whether data could be used to identify an individual assumed a relatively stable technological environment. AI makes what was once unthinkable trivially easy, a mere structural byproduct of how it works.

The Canadian response may require treating the two sides of the AI equation differently. A more permissive approach to training data inputs, grounded in universally applicable limitations and meaningful transparency, could help Canada remain competitive without abandoning its core commitments. But that flexibility on inputs needs to be paired with genuinely innovative approaches to the harms arising from AI outputs. This means moving beyond prohibitions on deliberate re-identification toward regulatory tools that address the structural capacity of AI systems to reconstruct personal information from non-personal data. Accountability measures, inference auditing and restrictions on the aggregation and disclosure of inferred personal profiles need to be part of the regulatory landscape.

The privacy community and policymakers have been slow to recognize this shift, anchoring the discussion primarily on how personal information enters AI systems. But if Canada’s AI strategy and privacy reform are to meet the moment, they must confront the harder truths: that de-identification, as we have understood it for decades, may no longer work and that our conversations about privacy need to radically change.

I’m open, Mr. Geist.

But knowledge is power. It always has been. Even your article notes that whatever an AI can accomplish, a human can also accomplish, although it might take longer.

Governments and spies collect information on their citizens because they know this.

Now that most of the rest of us have acknowledged the obvious, maybe we can move forward on the real discussion about whether or not citizens should really be giving away so much of their privacy to these people.

This is a very important perspective, especially in today’s AI-driven world. The shift from “what data is collected” to “what insights AI can generate” completely changes how we should think about privacy. The example of re-identification through inference really highlights how traditional frameworks may no longer be enough.

What stands out is that the real risk is no longer just data exposure, but data reconstruction through intelligence. This makes it critical for policymakers to rethink regulations beyond consent and de-identification, and focus more on AI accountability and output control.

A much-needed conversation as AI adoption continues to accelerate globally.
