As elected officials place Internet giants such as Google and Facebook under an increasingly intense microscope, the pressure mounts on those companies to play more proactive roles in policing content on their networks. In recent weeks, the demands have come from seemingly every direction: privacy commissioners seeking rules on the removal of search results, politicians calling for increased efforts to address fake news on Internet platforms, and Internet users wondering why the companies are slow to take down allegedly defamatory or harmful postings.

My Globe and Mail op-ed notes Internet companies can undoubtedly do more, but laying the responsibility primarily at their feet poses its own risks as governments and regulators effectively cede responsibility for content moderation and policing to private, for-profit companies. In doing so, there is a real chance that the Internet giants will become even more powerful, limiting future competition and entrenching an uncomfortable reliance on private organizations for activities that are traditionally conducted by courts and regulators.

Contrary to some claims, there has never been a fully hands-off approach to Internet regulation. All Internet companies – like any other company – respond to court orders to take down content or disclose the identity of their subscribers or users. The major companies such as Google, Facebook, Microsoft and Twitter also regularly release detailed transparency reports that provide insights into lawful requests and takedown efforts.

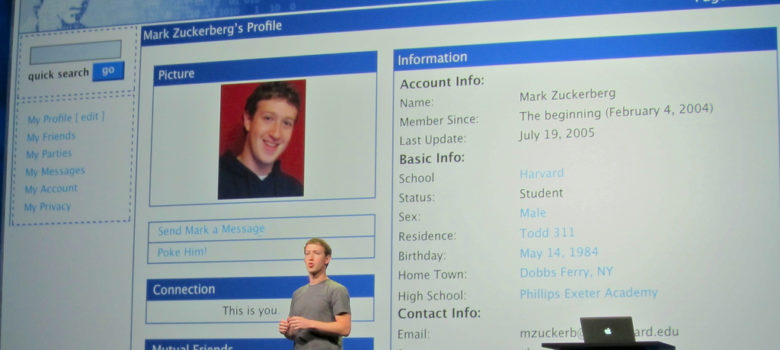

Some companies have proactively attempted to block or mute certain content. In his U.S. congressional appearance last week, Facebook CEO Mark Zuckerberg emphasized his company’s success in combatting terrorist materials, noting that the technology is effective enough to ensure that the vast majority of such posts are never viewed by anyone.

Similarly, YouTube, the world’s largest video site, automatically flags copyright-infringing content identified by rights holders, which is then muted, taken down, or used to generate revenues for the rights holder through advertising. These content moderation efforts require significant resources, with hundreds of millions of dollars invested in employees and in technologies that use automation to help identify content.

Before politicians or regulators mandate additional requirements, we should recognize the risks associated with outsourcing responsibility for content moderation to Internet companies.

First, mandating broader content moderation and takedowns virtually ensures that the big players will only get bigger given the technology, research, and personnel costs that will be out of the reach of smaller companies. At a time when some of the Internet companies already seem too big, content moderation of billions of posts or videos would reaffirm their power, rendering it virtually impossible for upstart players to compete.

In fact, we are already perilously close to entrenching the large Internet players. At a conference on large-scale content moderation held earlier this year in California, there was a wide gap between companies such as Google and Facebook (which deploy thousands of people to the task) and smaller companies such as Medium, Reddit, and Dropbox, which have hundreds of millions of users but only a handful of people focused on content moderation issues.

Second, there remains considerable uncertainty about what politicians actually want. Last week, members of Congress took turns criticizing Facebook either for not doing enough to take down content or for doing too much. For example, Representative David McKinley wanted to know why Facebook was slow to remove posts promoting opioids, while Representative Joe Barton raised concerns about Facebook taking down conservative content.

Similar issues arise in other countries. For example, Facebook faces potential liability in the millions of dollars for failing to remove hate content in Germany, but earlier this month a German court ordered the company to restore comments it had deemed offensive.

Third, supporters of shifting more responsibility to Internet companies argue that our court systems or other administrative mechanisms were never designed to adjudicate content-related issues on the Internet’s massive scale. Many Internet companies were never designed for it either, but we should at least recognize the cost associated with turning public adjudication over to private entities.

Leaving it to search engines, rather than the courts, to determine what is harmful and should therefore be removed from search indexes ultimately empowers Google and weakens our system of due process. Similarly, requiring hosting providers to identify instances of copyright infringement removes much of the nuance in copyright analysis, creating real risks to freedom of expression.

Advocates of increased regulation are quick to point out that the Internet is not a no-law land. Yet if the determination of the legality of online content is left largely to private Internet companies, we may be consigning courts and regulators to a diminished role while strengthening the Googles and Facebooks, just as concern grows over excessive power in the hands of a few Internet giants.

This issue always leaves me of two minds.

On the one hand, as a firm believer in free enterprise, I am loath to wish more government regulation by fiat on legitimate businesses unless they have shown an inability or unwillingness to regulate themselves.

At the same time, the power of the Internet is unlike that of any other medium of mass communication in our history. So far, the web’s goliaths such as Facebook and Google have shown an almost total disregard and disdain for the trust placed in them by users (who are their customers).

Perhaps a reasonable first step would be to outlaw cookies, the tracking tool websites use to secretly gather oodles of information about visitors. Indeed, in the case of Facebook, you don’t even have to be a customer; simply visiting a site with a Facebook “button” that links to Facebook is enough. I can only shudder thinking about what Google does with the info it collects.

To say the users of Facebook are the customers is incorrect; Facebook’s customers are actually the likes of Cambridge Analytica, who pay for data. However, the common conception of Facebook users as the product is, in my opinion, hyperbolic and cynical. The services offered by Facebook should be seen as an incentive: something offered in exchange for the use of private information. The waters are muddied a little by the fact that Facebook tracks users AND non-users via cookies. The other major issue I see is that private data is provided to Facebook’s customers without informed consent from users, and without any consent at all from non-users.

That’s not accurate either. Facebook’s customers are its advertisers. What makes FB advertising so powerful is that an advertiser can easily target its audience based on the likes and dislikes of FB users. The advertiser does not actually see or have access to a user’s private data unless the user chose to share it. FB does not share or sell users’ data.

As for cookies, you really should read up on what cookies are and how they work – avoiding that kind of tracking is as simple as disallowing third-party cookies.

I once saw how NASDAQ addresses the same kind of large-scale problem (in their case, doubtful trades), but:

– there was settled legislation and case law on the subject

– the users were members of the National Association of Securities Dealers, a self-governing body, and

– there was money on the line.

That’s arguably true of parts of YouTube, but not of Facebook, or of YouTube in general.

My description of the NASDAQ approach is at https://leaflessca.wordpress.com/2017/04/05/how-nasdaq-solved-youtubes-problem/

–dave

The kind of “regulation” being sought these days is not really possible.

You can try to achieve it, but what you’re actually going to get is mass censorship, false positives, further loss of privacy, and Orwellian control issues.

Methinks the Orwellian control issues reside at Facebook and Google themselves.

Only partly correct.

It would be the cooperation of tech services with agencies of government, police and intelligence that would form a more complete picture.

The resulting scenario could even be dangerous.

It’s possible to achieve a good standard of behavior where one has a single criterion to meet. A “bright-line” standard that one not commit fraud in financial dealings is not easy to meet, but it is self-funding: it pays to achieve it, as NASDAQ found.

If one is trying to meet multiple criteria, it can be like the biblical warning against trying to serve both God and Mammon. If they’re incommensurable, you _will_ fail.

Although courts have long issued such orders, many companies have refused to take down content, and many have refused to identify customers. And even where they have not refused, complying with a court order is not the same thing as being “regulated”. The ONLY long-term solution will be to eventually abandon all of our misguided efforts at regulating so-called “illegal speech”.

Or we can keep fighting, which we will, if we leave it up to the lawyers.

See https://www.canlii.org/en/ca/fct/doc/2017/2017fc114/2017fc114.html for an example of an extortion-scheme company trying to avoid taking down material. It was located in Romania and refused to obey a Canadian court order, but the Romanian courts enforced the order against it. The company was eventually shut down.